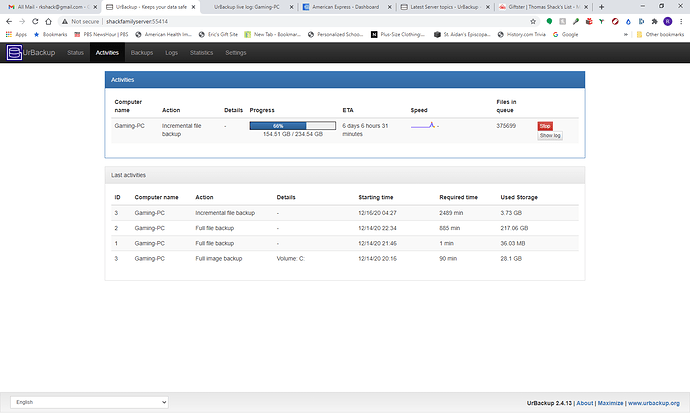

I have UrBackup running on Windows Server 2019. I used to run UrBackup on Ubuntu, so this setup is new. Image backups happen relatively quickly, but a file backup of 2 hard drives takes over a day. The full backup took 885 minutes. The first incremental backup took 2489 minutes. Any suggestions on how to proceed?

File operations are fairly slow compared to the block-level operations used for disk images, particularly when dealing with large numbers of small files. Consider being picky about what goes into file backups, sticking to user-generated content: documents, photos, email, etc. Having the UrBackup database files on an SSD allegedly helps.
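To see which folders are responsible for the "large numbers of small files", something like the sketch below could help decide what to leave out of file backups. It is not part of UrBackup; the function names and the 64 KiB "small file" threshold are assumptions made up for illustration.

```python
import os
from collections import defaultdict

# Assumed cut-off for what counts as a "small" file; adjust to taste.
SMALL_FILE_BYTES = 64 * 1024


def summarize_tree(root, small_threshold=SMALL_FILE_BYTES):
    """Return {top_level_folder: [file_count, small_file_count, total_bytes]}.

    Files sitting directly in `root` are grouped under ".".
    """
    root = os.path.abspath(root)
    stats = defaultdict(lambda: [0, 0, 0])
    for dirpath, _dirnames, filenames in os.walk(root):
        rel = os.path.relpath(dirpath, root)
        top = "." if rel == "." else rel.split(os.sep)[0]
        for name in filenames:
            try:
                size = os.lstat(os.path.join(dirpath, name)).st_size
            except OSError:
                continue  # skip files that vanish or cannot be read
            entry = stats[top]
            entry[0] += 1
            entry[1] += 1 if size < small_threshold else 0
            entry[2] += size
    return dict(stats)


def print_report(root):
    """Print one line per top-level folder, most files first."""
    rows = sorted(summarize_tree(root).items(),
                  key=lambda kv: kv[1][0], reverse=True)
    for top, (count, small, total) in rows:
        print(f"{count:>10} files  {small:>10} small  "
              f"{total / 2**30:8.2f} GiB  {top}")
```

Calling `print_report` on each backed-up drive (for example `print_report("D:\\")`) lists the folders with the most files first. Those are the likely candidates for exclusion or for imaging instead.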

Thoughts based on seeing the client is “Gaming-PC”:

For a drive containing games, e.g. a Steam library, which often contains masses of tiny files, consider simply imaging it or excluding it from file backups. .vhd files can be mounted and files copied out to restore individual items if needed.

I’m stuck with the slow speeds myself. My storage is NTFS and doesn’t support ReFS, so I have to live with that penalty: I have a client backing up those types of things which I can’t image onto an NTFS store, since the source drive is over 2TB and UrBackup doesn’t support .vhdx or raw images to an NTFS target, only .vhd(z).

UrBackup isn’t very clear about this, but in my case the limitation was server disk I/O. iotop showed me that I was maxing out my storage’s write speed. I don’t have this issue with Macrium, though, I guess because I/O is forwarded directly to the storage repository (which in my case is an NFS share). It would be nice if support for this was officially enabled.

Edit: After further testing, I still don’t totally know what the bottleneck is. There’s no disk I/O that should have been maxed out based on what iotop was reporting to me, so the limit must be somewhere else.
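One way to cross-check what iotop reports is to measure the raw sequential write rate of the backup storage path directly. The following is only a rough sketch; the function name and the default sizes are assumptions. It also measures large sequential writes only, so it gives an upper bound rather than what a file backup of many small files will actually reach.

```python
import os
import tempfile
import time


def measure_write_mib_per_s(directory, total_mib=256, chunk_mib=4):
    """Write a temporary file into `directory` and return MiB/s.

    The data is fsync'ed before the clock stops, so the figure reflects
    what reached the storage rather than only the page cache.
    """
    chunk = os.urandom(chunk_mib * 1024 * 1024)
    chunks = max(1, total_mib // chunk_mib)
    fd, path = tempfile.mkstemp(dir=directory, prefix="write_probe_")
    try:
        start = time.perf_counter()
        with os.fdopen(fd, "wb") as f:
            for _ in range(chunks):
                f.write(chunk)
            f.flush()
            os.fsync(f.fileno())
        elapsed = time.perf_counter() - start
    finally:
        os.remove(path)  # always clean up the probe file
    return (chunks * chunk_mib) / elapsed
```

Pointing it at the backup storage directory (e.g. the NFS mount) and comparing the result with the throughput seen during a backup gives a hint whether raw write speed is anywhere near the limit. If the measured rate is far above what the backup reaches, the bottleneck is probably elsewhere.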