I'm having an issue with one particular server: it fails to back up a data drive on a Windows Server 2012 R2 VM.

The client keeps erroring out at random points during the full backup. Sometimes it transfers 1 GB before failing, sometimes 5 GB, and so on.

Starting unscheduled full image backup of volume “E:”…

Basing image backup on last incremental or full image backup

Error retrieving last image backup. Doing full image backup instead.

Block sent out of sequence. Expected block >=2463216 got 0. Retrying…

Client disconnected before sending anything (Timeout: unavailable).

Transferred 9.22437 GB - Average speed: 91.9135 MBit/s

Time taken for backing up client TSR-VERIATO: 14m 23s

Backup failed

Debug log at the time shows:

2018-07-03 10:37:12: WARNING: Error deleting snapshot set {D61F6D7B-B229-4FE3-91C9-AE3FE5A65A7C}

2018-07-03 10:51:33: ERROR: Pipe broken -2

2018-07-03 10:51:33: ERROR: Pipe broken -4

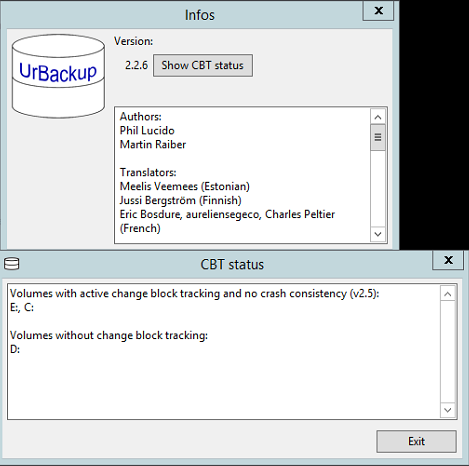

The C: drive backs up without any issue, and I can also run incrementals on it without problems.

The E: drive (the problem drive) has been wiped, deleted, removed and rebuilt.

I am running urbackupclientbackend.exe with high priority and high I/O priority.

Is there a way to adjust the timeout settings on the UrBackup client? Is a timeout even the cause here?